Here's a pattern I keep seeing across healthcare organizations.

A team gets excited about an AI use case. Leadership greenlights it. There's a kickoff with 20 people in the room. Everyone agrees this will be transformative.

Eighteen months later, the project is quietly cancelled. Or "reprioritized," which is healthcare's polite version of the same thing.

What went wrong? Nobody planned the first 90 days.

The projects I've seen actually make it to production share a similar structure in those early months. I've been collecting notes on what they have in common, and it comes down to a phase-by-phase checklist.

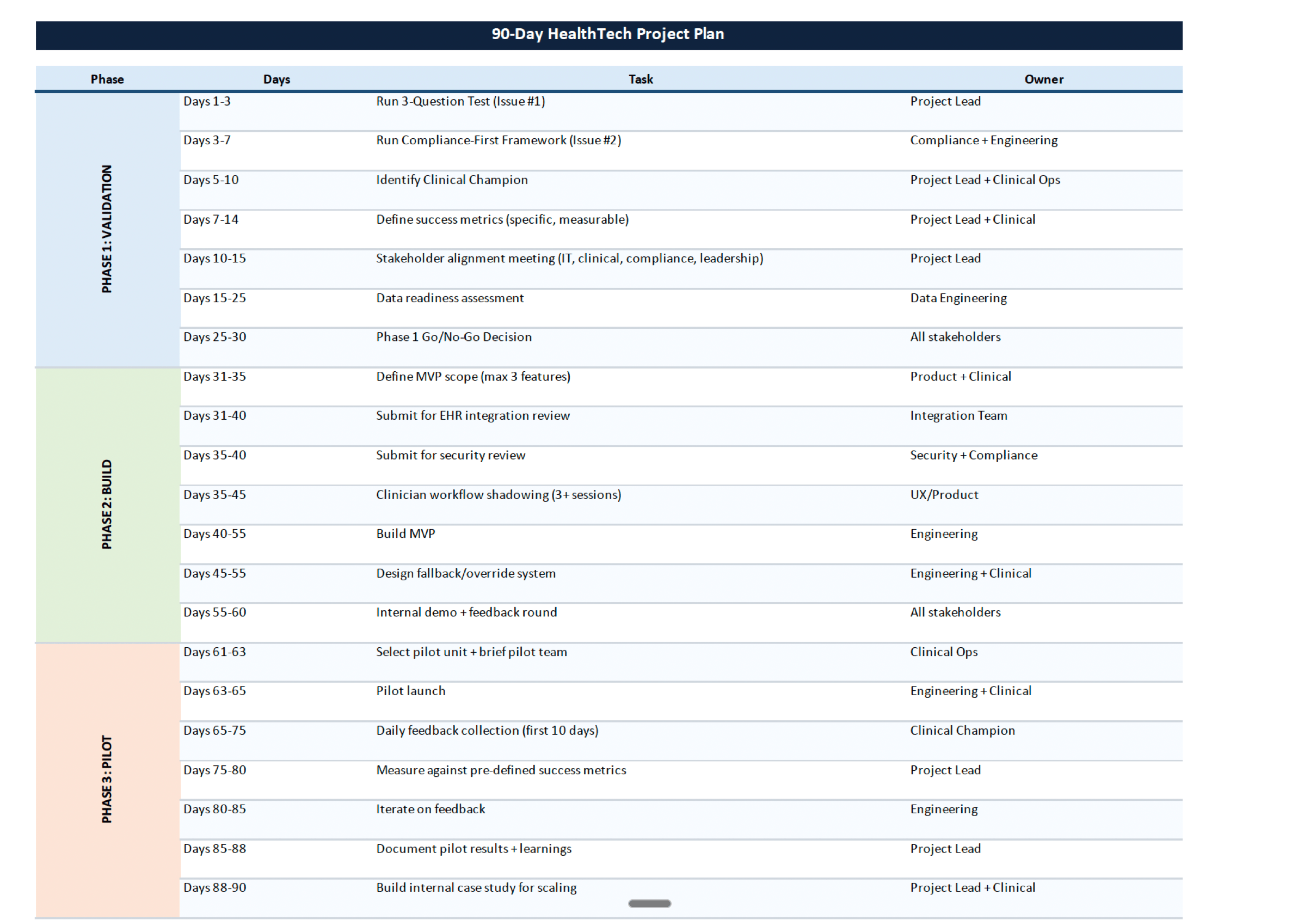

PHASE 1: DAYS 1-30, VALIDATION

The point of this phase is simple: figure out if the idea is worth pursuing before you spend real money on it.

The biggest mistake in healthcare AI is picking the wrong problem. Not the wrong model. The wrong problem. I've watched organizations start building because the technology felt exciting, then realize three months in that nobody on the floor actually cared about the thing they built.

What to get done in the first 30 days:

Run the 3-Question Test (from Issue #1): Is this a real clinical or operational pain point? Can we measure improvement? Do we have the data?

Run the Compliance-First Framework (from Issue #2): Map data flows, check security perimeters, audit governance. Before any code.

Find a clinical champion. Not a VP who signed off on a slide deck. Someone on the floor who'll push for adoption when it gets hard. Without that person, the project dies at pilot.

Define what success looks like. A number. A measurable outcome. Not "improve efficiency." If you can't describe success concretely before building, you won't know if you achieved it after.

Get IT, clinical, compliance, and leadership in one room. Once. Before anything else. The number of projects that stall because these groups were never on the same page from the start is wild.

Check whether the data actually exists. In a usable format. Clean enough. Accessible within your security perimeter. I've seen this single step save organizations months of work that would have led nowhere.

If any of these checks fail, stop. Fix the gap first. Building a good solution on a broken foundation is the most expensive mistake in this space.
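For teams that track this in a script or notebook, the stop rule above can be written as a simple go/no-go gate. This is a minimal sketch, not a prescribed tool: the `ValidationCheck` class, the `phase1_gate` function, and the pass/fail values are all hypothetical and only illustrate the "any failure means stop" logic.

```python
from dataclasses import dataclass


@dataclass
class ValidationCheck:
    name: str
    passed: bool


def phase1_gate(checks):
    """Return (go, gaps). go is True only when every check passed."""
    gaps = [c for c in checks if not c.passed]
    return len(gaps) == 0, gaps


# Illustrative values only; fill these in from your own Phase 1 review.
checks = [
    ValidationCheck("3-Question Test passed", True),
    ValidationCheck("Compliance-First Framework completed", True),
    ValidationCheck("Clinical champion identified", True),
    ValidationCheck("Success metric defined as a number", True),
    ValidationCheck("IT, clinical, compliance, leadership aligned", True),
    ValidationCheck("Data exists in a usable, accessible format", False),
]

go, gaps = phase1_gate(checks)
if go:
    print("Proceed to Phase 2")
else:
    print("Stop. Fix these gaps first:")
    for gap in gaps:
        print(f"  - {gap.name}")
```

The gate is deliberately all-or-nothing: one failed check blocks the build, which matches the rule of fixing the gap before moving on.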

PHASE 2: DAYS 31-60, BUILD

Build the smallest version that proves the concept works.

Not the full vision. Not the roadmap. The smallest useful thing a real clinician can try and give you honest feedback on.

What to get done in days 31 through 60:

Define MVP scope. If version one has more than 3 features, it's too big. What's the single most important capability? Ship that.

Start EHR integration work now. Not after the model is built. Integration with Epic, Cerner, MEDITECH, whatever your org uses, is almost always the longest wait. I know teams that built a model in 4 weeks and then sat around for 6 months waiting on integration.

Design for where the clinician already works. The AI output needs to appear inside the EHR, at the bedside, in the flow they already follow. If your tool means opening a new app or adding steps, people won't use it.

Build the fallback. What happens when the AI is wrong? When it's down? Clinicians need a fast way to override. This is patient safety, not a feature request.

Submit for security review early. Not after the build. The review takes time, and if you wait until the build is done, the finished tool sits idle while the review runs.

Shadow clinicians before you finalize the interface. Watch how they actually work. Not how the diagram says they work. That gap between the two is where most tools fail.
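To make the "build the fallback" item concrete, here is one way the override and outage paths could look in code. It is a sketch under assumptions: `Recommendation`, `recommend`, and the two stand-in model functions are hypothetical names, and a real deployment would also need timeouts, logging, and whatever alerting your organization requires.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Recommendation:
    value: Optional[str]
    source: str  # "model", "fallback", or "clinician_override"


def recommend(
    model_call: Callable[[dict], str],
    patient: dict,
    override: Optional[str] = None,
) -> Recommendation:
    # A clinician override always wins and never calls the model.
    if override is not None:
        return Recommendation(override, "clinician_override")
    try:
        return Recommendation(model_call(patient), "model")
    except Exception:
        # Model is down or erroring: show no AI output and let the
        # standard workflow continue without it.
        return Recommendation(None, "fallback")


# Hypothetical stand-ins for a real model service.
def working_model(patient: dict) -> str:
    return "flag for review"


def broken_model(patient: dict) -> str:
    raise RuntimeError("model service unavailable")


print(recommend(working_model, {"id": "demo"}))
print(recommend(broken_model, {"id": "demo"}))
print(recommend(working_model, {"id": "demo"}, override="no action needed"))
```

Recording the `source` on every result also tells you later how often clinicians overrode the tool or worked without it, which is useful evidence during the pilot.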

PHASE 3: DAYS 61-90, PILOT

Put it in real hands. Learn fast.

One unit. One department. Clinicians who volunteered. Small enough to learn from, large enough to matter.

What to get done in the final 30 days:

Pick the pilot unit carefully. Supportive manager, open-minded clinicians, clear use case. Set it up to succeed.

Collect feedback daily for the first two weeks. Not a survey at the end. Short daily check-ins. "What worked? What didn't? What confused you?" Ten days of real usage feedback is worth more than six months of planning.

Measure against the success metrics from Phase 1. If you're missing them, figure out whether it's a model problem, a workflow problem, or an adoption problem. Different root cause, different fix.

Write everything down. What worked, what failed, what surprised you. This is what you'll use to make the scaling argument later.

Iterate before you scale. Don't roll out hospital-wide after two good weeks. Use the remaining time to fix what's broken. Let clinicians see their feedback actually changed something.

Build the internal case study. By Day 90, you need the narrative: "Here's what we tried, what happened, and why we should expand." That's what unlocks the next round of funding.
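Measuring against the Phase 1 success metric is easier when progress is expressed as a share of the improvement you planned. The sketch below assumes you recorded a baseline and a target in Phase 1; the function name and the example numbers (a 40-minute baseline, a 25-minute target, 31 minutes measured in the pilot) are made up for illustration.

```python
def fraction_of_target_achieved(baseline: float, target: float, measured: float) -> float:
    """Share of the planned improvement the pilot has delivered so far.

    Works whether lower is better (minutes per task) or higher is
    better (screening rate), because the sign of the planned change
    cancels out.
    """
    planned_change = target - baseline
    if planned_change == 0:
        raise ValueError("target must differ from baseline")
    return (measured - baseline) / planned_change


# Illustrative numbers only, not data from a real pilot.
progress = fraction_of_target_achieved(baseline=40.0, target=25.0, measured=31.0)
print(f"{progress:.0%} of the planned improvement achieved")
```

With these example numbers the pilot has delivered 60% of the planned improvement. A result well below 100% is the cue to ask whether the gap is a model problem, a workflow problem, or an adoption problem.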

WHY 90 DAYS

It forces you to make decisions instead of scheduling more meetings.

Most 18-month timelines aren't 18 months of building. They're 3 months of meetings, 4 months waiting on approvals nobody requested early enough, 2 months rebuilding after the requirements shifted, and a pilot stuck in committee limbo.

Plan the first 90 days well and you either have a working pilot with real data, or you know the project should be killed before you burn a full year on it. Either outcome saves you time and money.

For context, here's how the last four issues fit together:

Issue #1: Should we even start this? (3-Question Test)

Issue #2: Are we safe to build? (Compliance-First Framework)

Issue #3: Are we buying the right tool? (5 Vendor Questions)

Issue #4: How do we actually ship? (This checklist)

That's the playbook so far. More coming.

- Guryash

P.S. What would you change about this? What am I missing? I want to hear it.

Bonus: 90-Day Healthcare AI Deployment Tracker

This tracker is exclusive to the email edition of HealthTech Singh.

Link to the tracker: AI Deployment Tracker

Want more? Follow me on LinkedIn where I share daily insights on healthcare AI implementation: linkedin.com/in/guryashsingh